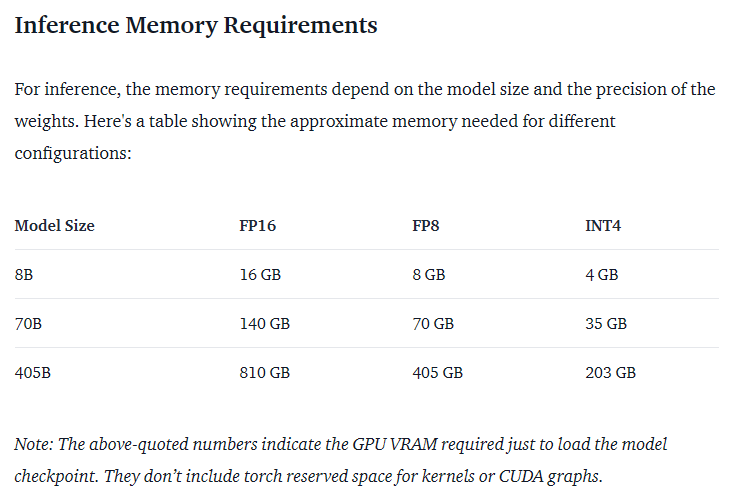

Anyone can download, but practically no one can run it.

With the absolute highest memory compression settings, the largest model that you can fit inside of 24GB of VRAM is 109 billion parameters.

Which means even with crazy compression, you need at the very least, ~100GB of VRAM to run it. That’s only in the realm of the larger workstation cards which cost around $24,000 - $40,000 each, so y’know.

You can get usable performance on a CPU with good memory bandwidth. Apple studios are the best way to get that right now, but a good Epyc with 256GB of RAM works too.

Of course, you could also just run 5 GPUs

“usable” sure if you want to wait 10 minutes a word.

Ouch.

Thanks, I was wondering

I’m envisioning downloading this, then my pc starts to smell bad and spits at me a lot

lol it’s just a program that tries to do everything with any data you throw at it (whatever happened to UNIX philosophy…) but using insane amounts of computing power. General purpose models will always, ALWAYS eventually run into the wall of the second law of thermodynamics.

LLM extensions… yeah we had a tool for that. It’s called an OS and programs.

Until we don’t overcome the limitations of current computers, replacing code with training data and hoping it will be as good and efficient is a naive pipe dream.I’ve heard that performance improves offline. Is it possible to set a model loose on a project and let it iteratively work, or is there a better approach?

If you are interested in code completion, I recommend taking a look at https://refact.ai/. Hosting it (last time I tried) was almost painless, setting up docker to work with your GPU takes some time, but is pretty ok-ishly documented on NVIDIA page, and then you just run a docker and it worked.

It runs a server you can connect to i.e with a VSCode plugin, that will provide code completion or a chatbot (depending on what model you run), and it also has an option to let it loose on your project. You set training hours, give it a git repo (or a zipfile with whole project), and it starts training, which should tailor it towards giving more relevant code completion in the context of the project. I’m not sure if you can do that for the chatbot models, though.

However, I was trying it on my spare gaming PC turned server, that has an unused NVIDIA 1060, and while I could run some smaller models, I wasn’t able to get the training working - the 6Gb of VRAM simply aren’t enough for that. I also tried running it on the PC I work on, but it kept eating like 20-30Gb of RAM for the container, which made it kind of hard to also do anything else on the PC.

However, if you have a spare PC/server with good GPU that can run it, I’d say it’s one of the better ways how to get personalized code completion, that keeps your data local and secure.

As a side note, I think you can give it API keys and let it use online models, but that would kind of defeat the point.